The coming shift in CloudOps AI

AI dominates headlines and conference agendas. Most of the attention is on copilots (AI assistants that work alongside a human who stays in control) and productivity gains. Faster code. Better answers. Less manual work per engineer.

That progress is real. But in CloudOps, copilots are only the opening move.

Today, most AI in operations explicitly requires a human in the loop. The system analyzes state, identifies risk, or proposes an action, then waits. A human reviews the recommendation, supplies missing context, and decides whether to proceed.

This pattern shows up in tools that:

- Review infrastructure and configuration changes

- Recommend actions based on cost, reliability, or risk signals

- Assist with bounded operational workflows that still require approval

This human-in-the-loop (HITL) approach is essential today because people do not yet trust agents to act autonomously in production. That skepticism is warranted. Operational systems are complex, high-stakes, and full of exceptions.

Humans constantly fill in gaps the AI cannot see. Institutional knowledge. Historical edge cases. Implicit constraints. An understanding of which dependencies actually matter and which failures are routine. The agent appears effective because a human is quietly providing the missing pieces.

Here is the critical risk. The agent will fail the moment the human is removed if the context they supplied was never preserved.

An agent that performs well during HITL does not automatically become safe when autonomy increases. If the reasoning, tradeoffs, and relationships applied by humans are lost, the system will regress as soon as it operates on its own. The same predictable failures return, only faster and at greater scale.

This is why the shift from copilots to autonomous CloudOps agents is not a problem of incapable AI models. It is a context problem.
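One way to keep that context from evaporating is to make the reviewer's rationale a required part of every HITL decision, stored next to the decision itself. The sketch below assumes hypothetical `Proposal`, `Decision`, and `DecisionLog` types; it illustrates the idea rather than describing any particular product.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Proposal:
    # Hypothetical shape of a change proposed by an agent.
    change_id: str
    action: str
    agent_reasoning: str


@dataclass
class Decision:
    change_id: str
    approved: bool
    reviewer: str
    rationale: str    # the human-supplied context that must survive
    decided_at: str   # ISO 8601 timestamp (UTC)


@dataclass
class DecisionLog:
    # Append-only and in-memory for this sketch; a real log would be durable.
    entries: list[Decision] = field(default_factory=list)

    def record(self, proposal: Proposal, approved: bool,
               reviewer: str, rationale: str) -> Decision:
        # An approval or rejection with no explanation is exactly the
        # context that disappears once the human steps out of the loop.
        if not rationale.strip():
            raise ValueError("a decision must include the reviewer's rationale")
        decision = Decision(
            change_id=proposal.change_id,
            approved=approved,
            reviewer=reviewer,
            rationale=rationale,
            decided_at=datetime.now(timezone.utc).isoformat(),
        )
        self.entries.append(decision)
        return decision

    def history(self, change_id: str) -> list[Decision]:
        # Everything humans decided about this change, in the order recorded.
        return [d for d in self.entries if d.change_id == change_id]


# Usage (hypothetical values): the reviewer rejects a downsizing proposal and says why.
log = DecisionLog()
proposal = Proposal("chg-101", "downsize db-replica-2",
                    "CPU below 10% for 14 days")
log.record(proposal, approved=False, reviewer="alice",
           rationale="Replica absorbs month-end reporting spikes; keep current size.")
```

The agent that later proposes the same downsizing can read `log.history("chg-101")` and see why it was rejected, instead of relying on a human to remember.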

To understand why this context is so hard to capture, and why it keeps leaking out of tools and processes, we first need to be clear about what CloudOps actually is.

What Is CloudOps?

CloudOps is often described through its tools and practices. Monitoring systems, CI/CD pipelines, cost dashboards, security scanners. That description is accurate, but incomplete.

Those tools are how CloudOps operates day to day. They surface signals, enforce constraints, and carry changes into production. But they do not, on their own, explain how decisions are made when adverse conditions arise, such as conflicting signals, outages, or cost spikes.

CloudOps also includes a decision layer that governs how cloud systems change over time. This layer lives mostly in human expertise rather than in tools or applications.

The human layer is where reliability, cost, and risk are weighed against each other. Where tradeoffs are debated. Where exceptions are justified based on business context, delivery pressure, or operational reality. Some of this context is written down in tickets or documents, but much of it remains tacit. It exists in conversations, institutional memory, and judgment calls made under real constraints.

That context is as much a part of CloudOps as the systems that execute changes. Ignoring it leads to a distorted view of how cloud environments actually operate.

In practice, every meaningful cloud decision sits at the intersection of three concerns.

- DevOps prioritizes delivery speed and reliability.

- FinOps focuses on cost, efficiency, and resource utilization.

- DevSecOps centers on risk, compliance, and governance.

No operational change belongs cleanly to just one of these domains. A scaling decision affects cost and availability. A deployment strategy impacts velocity and security posture. A cost optimization can introduce reliability risk or operational toil.

CloudOps is where these perspectives are reconciled. Not perfectly, but continuously.

As cloud environments grew more programmable, more dynamic, and more financially transparent, this coordination became harder to sustain informally. The tools evolved quickly. The decision context did not consolidate in the same way.

Why CloudOps Became Fragmented

CloudOps fragmentation was not caused by bad tooling or organizational failure. It was the predictable result of removing long-standing constraints faster than we replaced them with new systems.

Before the cloud, infrastructure was effectively immutable. Hardware could change, but only slowly and deliberately. Procurement cycles, physical access, and manual processes enforced discipline. Sysadmins focused primarily on updating software, while hardware remained a stable foundation.

Early cloud platforms broke that model by virtualizing hardware. Infrastructure became elastic and on demand. You could create, resize, and destroy environments in minutes rather than months. But operating models were still inherited from the data center. Teams were suddenly working on a mutable substrate using mental models designed for physical hardware.

Infrastructure as Code and cloud APIs accelerated this shift even further. Now both hardware and software could be programmed. Change became cheap, fast, and continuous. Environments were no longer static backdrops for applications. They became living systems, constantly evolving alongside code.

This is where coherence broke down. As mutability increased, so did the number of failure modes. Every change now had simultaneous implications for reliability, cost, and risk. No single team could reason about all three dimensions at once. Specialization followed naturally.

DevOps focused on delivery speed and uptime. FinOps emerged to control continuous, usage-based spend. DevSecOps took ownership of identity, policy, and compliance embedded directly into infrastructure. Each discipline was rational. Each built its own tools, metrics, and workflows.

What never emerged was a shared system that connected these perspectives into a single operational view. Decisions that spanned teams were coordinated manually through reviews, tickets, and meetings. Context lived in people rather than platforms.

That gap is survivable when humans are doing the coordination. It becomes dangerous when automation and AI enter the loop.

Why This Breaks Down for AI

Humans cope with fragmented operational systems by filling in the gaps. When something looks off, an experienced operator knows where to look, which dashboard is misleading, which report is stale, and who to ask for context. Much of CloudOps still works today because critical understanding lives in people, conversations, and institutional memory.

AI does not have native access to any of that.

When operational context is spread across ticketing systems, CI pipelines, cost tools, security scanners, spreadsheets, and institutional memory, each acting as a system of record for its own domain, AI can only see isolated slices of reality. Each system is optimized for a valid goal. Reliability tools prioritize uptime. Cost tools prioritize spend. Security tools prioritize risk. None of them capture the full set of tradeoffs behind a decision.

In that environment, AI behaves exactly as designed. It optimizes what it can see and ignores what it cannot. The result is recommendations that are locally correct and globally conflicting.
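A toy illustration of that failure mode, assuming three hypothetical tools that each score the same sizing options on a single metric. The option names and figures are invented for illustration and do not come from any real environment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    # Hypothetical sizing options for one service; all figures are invented.
    name: str
    monthly_cost: float   # USD per month
    availability: float   # expected fraction of time available
    open_findings: int    # unresolved security findings


OPTIONS = [
    Option("small-single-az", 400.0, 0.995, 2),
    Option("large-multi-az", 1600.0, 0.9995, 1),
    Option("medium-multi-az-hardened", 900.0, 0.999, 0),
]


# Each "tool" optimizes the one signal it can see.
def cost_tool(options: list[Option]) -> Option:
    return min(options, key=lambda o: o.monthly_cost)


def reliability_tool(options: list[Option]) -> Option:
    return max(options, key=lambda o: o.availability)


def security_tool(options: list[Option]) -> Option:
    return min(options, key=lambda o: o.open_findings)


recommendations = {
    "cost": cost_tool(OPTIONS).name,
    "reliability": reliability_tool(OPTIONS).name,
    "security": security_tool(OPTIONS).name,
}

for tool, choice in recommendations.items():
    print(f"{tool}: {choice}")
```

Each recommendation is correct for its own metric, yet all three disagree. Nothing in the data says how this organization weighs cost against availability and security for this particular service; that tradeoff lives with the people who own it.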

One obvious response is to introduce a new, unified platform: a single system that claims to be the source of truth for infrastructure state, cost, risk, and ownership. In theory, it would replace existing systems of record and finally give both humans and AI a complete picture.

In practice, it cannot. To work, it would have to either fully replace every DevOps, FinOps, and security system already in place, or continuously reconcile data across all of them. Replacing them is unrealistic. The cost, disruption, and organizational resistance alone make rip-and-replace infeasible. Reconciliation is not much better. It still depends on humans to resolve conflicts, explain exceptions, and decide which signal takes precedence when goals collide.

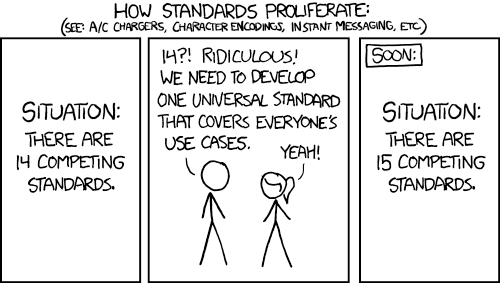

Standards – https://xkcd.com/927/

This is not a new problem. It is a recurring pattern in complex systems.

The implication is not that CloudOps needs another system of record to control decisions. It needs a way to observe how those decisions are made. Existing systems of record tell you what the environment looks like. They do not tell you how it got there.

What’s missing is a layer that can quietly capture what exists, what changes, which tradeoffs were considered, and why those changes were made, without disrupting the tools teams already rely on.

This layer works like a set of recorders acting as unobtrusive listeners. They do not replace CI systems, cost platforms, or security tooling. They connect them by recording actions and decisions as they happen and linking them over time. The outcome is a decision trace that both humans and AI can reason over, without forcing a rip-and-replace of systems that already work.
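A minimal sketch of such a recorder, assuming each existing tool can emit a small normalized event (for example through a webhook or log export) that carries a shared resource identifier. The `Event` fields and the `DecisionTraceRecorder` class are hypothetical; a real implementation would need durable storage and richer correlation than a single key.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    # Hypothetical normalized event emitted by an existing tool.
    source: str      # e.g. "ci", "cost", "security", "ticket"
    resource: str    # shared key used to link events across tools
    timestamp: int   # epoch seconds; orders events within a trace
    kind: str        # what happened: "plan", "alert", "approval", ...
    detail: str      # free-text context, including any stated rationale


class DecisionTraceRecorder:
    """Observes events from existing tools and links them per resource.

    It never writes back to the source systems; it only listens and correlates.
    """

    def __init__(self) -> None:
        self._events: defaultdict[str, list[Event]] = defaultdict(list)

    def observe(self, event: Event) -> None:
        self._events[event.resource].append(event)

    def trace(self, resource: str) -> list[Event]:
        # Linked history for one resource, in time order, across all sources.
        return sorted(self._events.get(resource, []), key=lambda e: e.timestamp)


# Usage (hypothetical events arriving out of order from different tools).
recorder = DecisionTraceRecorder()
recorder.observe(Event("cost", "db-replica-2", 100, "alert", "Spend up 40% week over week"))
recorder.observe(Event("ticket", "db-replica-2", 250, "approval", "Keep size: month-end reporting load"))
recorder.observe(Event("ci", "db-replica-2", 200, "plan", "Proposed downsize to a smaller instance class"))
recorder.observe(Event("security", "web-frontend", 150, "finding", "Outdated TLS policy"))

for e in recorder.trace("db-replica-2"):
    print(e.timestamp, e.source, e.kind, e.detail)
```

The recorder is deliberately read-only: the CI system, cost tool, and ticketing system remain the systems of record, and the trace only adds the links between them.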

IaC & GitOps: Where Decisions Become Enforceable

In most enterprises, the systems of record for CloudOps are already in place. Source control and CI systems like GitHub, GitLab, and Jenkins are where infrastructure definitions live, changes are reviewed, and pipelines enforce policy. Infrastructure as Code tools like Terraform, OpenTofu, and Pulumi, along with GitOps workflows, define how cloud resources are created, updated, and governed through those systems.

Together, these tools form the authoritative record of what exists in the cloud and how it is allowed to change. Without them, organizations can still operate infrastructure, but CloudOps becomes increasingly manual and harder to govern as scale and complexity grow.

This is where cloud decisions become real. Proposed changes move from ideas and discussions into versioned code, merge requests, and automated checks. At this boundary, non-deterministic reasoning is converted into deterministic, auditable execution. Over time, enterprises have learned to trust this model because it balances velocity with control and enables teams to operate at scale.

As AI enters CloudOps, this layer becomes the natural interface for automation. The goal is not for agents to bypass existing systems of record, but to operate through them. Agents propose changes by opening merge requests, provide rationale and tradeoffs during review, and trigger the same pipelines and policy checks that govern human-driven change. This creates a clear and familiar path for agents to earn trust within established workflows.

IaC and GitOps define the enforcement boundary where human judgment, organizational policy, and automation converge. AI reasons, humans approve, and Git enforces. That boundary makes safe automation possible today and creates a controlled path toward greater autonomy over time.
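The division of labor in "AI reasons, humans approve, and Git enforces" can be sketched as a merge gate. This assumes a simplified, hypothetical `MergeRequest` model rather than any real GitHub or GitLab API; in practice these checks would typically run as CI jobs and branch protection rules. Agent-authored changes pass through the same policy checks as human ones, must carry a rationale, and cannot merge without approval from a human other than the author.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class MergeRequest:
    # Hypothetical, simplified model; not a GitHub or GitLab API object.
    author: str
    is_agent: bool
    diff_summary: str
    rationale: str = ""
    approvals: set[str] = field(default_factory=set)


# A policy check returns an error message, or None when the change passes.
PolicyCheck = Callable[[MergeRequest], Optional[str]]


def no_public_buckets(mr: MergeRequest) -> Optional[str]:
    # Toy stand-in for a real policy-as-code rule.
    if "acl=public-read" in mr.diff_summary:
        return "change makes a bucket publicly readable"
    return None


def agent_must_explain(mr: MergeRequest) -> Optional[str]:
    # Agents must state their reasoning so reviewers can weigh the tradeoffs.
    if mr.is_agent and not mr.rationale.strip():
        return "agent-authored change has no rationale"
    return None


POLICIES: list[PolicyCheck] = [no_public_buckets, agent_must_explain]


def can_merge(mr: MergeRequest, humans: set[str],
              policies: list[PolicyCheck] = POLICIES) -> tuple[bool, list[str]]:
    errors = []
    # Same policy checks for every author: agents get no bypass.
    for check in policies:
        message = check(mr)
        if message is not None:
            errors.append(message)
    # Humans approve: at least one human approver who is not the author.
    if not (mr.approvals & humans) - {mr.author}:
        errors.append("needs approval from a human other than the author")
    return (not errors, errors)


# Usage (hypothetical): an agent proposes a change; it merges only after a human approves.
humans = {"alice", "bob"}
mr = MergeRequest(author="cost-agent", is_agent=True,
                  diff_summary="resize cache-node-3 instance class",
                  rationale="Utilization below 15% for 30 days; no scheduled peaks")
before = can_merge(mr, humans)   # blocked: no human approval yet
mr.approvals.add("alice")
after = can_merge(mr, humans)    # passes policies and has a human approver
```

Keeping a single path for humans and agents is the point of the design: greater autonomy could later be granted by relaxing the approval rule for narrow, low-risk change types, while the policy checks stay exactly the same.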

At the same time, these systems were never designed to capture the full operational context behind decisions. They enforce change effectively, but they do not explain why a change was made, how cost, risk, and reliability were weighed, or how decisions connect across teams and environments.

Once that missing context is layered on top of these systems of record, their role expands. Agents are no longer limited to proposing code changes in isolation. They can reason about live environments, recommend corrective actions, and coordinate with other agents that share the same operational truth, while still acting through the same trusted workflows. This is the path from AI that suggests changes to AI that safely operates cloud environments.

Preparing CloudOps for AI is not about replacing IaC, GitOps, or their underlying systems of record. It is about preserving them as the enforcement backbone and giving both humans and agents the context they need to act with confidence.

What It Means to Be AI-Ready in CloudOps

AI-ready CloudOps is not defined by how many models are deployed or by declared levels of autonomy. It is defined by whether operational decisions are visible, explainable, and consistent across teams and time. For operations leaders, readiness means strengthening the foundations that already govern change (IaC, GitOps, and existing workflows) while ensuring the context behind decisions does not disappear into tickets, meetings, or individual judgment. Teams that do this should not expect instant autonomy. What they should expect first is fewer repeated debates, faster alignment across reliability, cost, and risk, and a clearer operational picture they can trust. That clarity is what allows AI to progress from assisting humans to safely acting on their behalf, without increasing risk or eroding control.